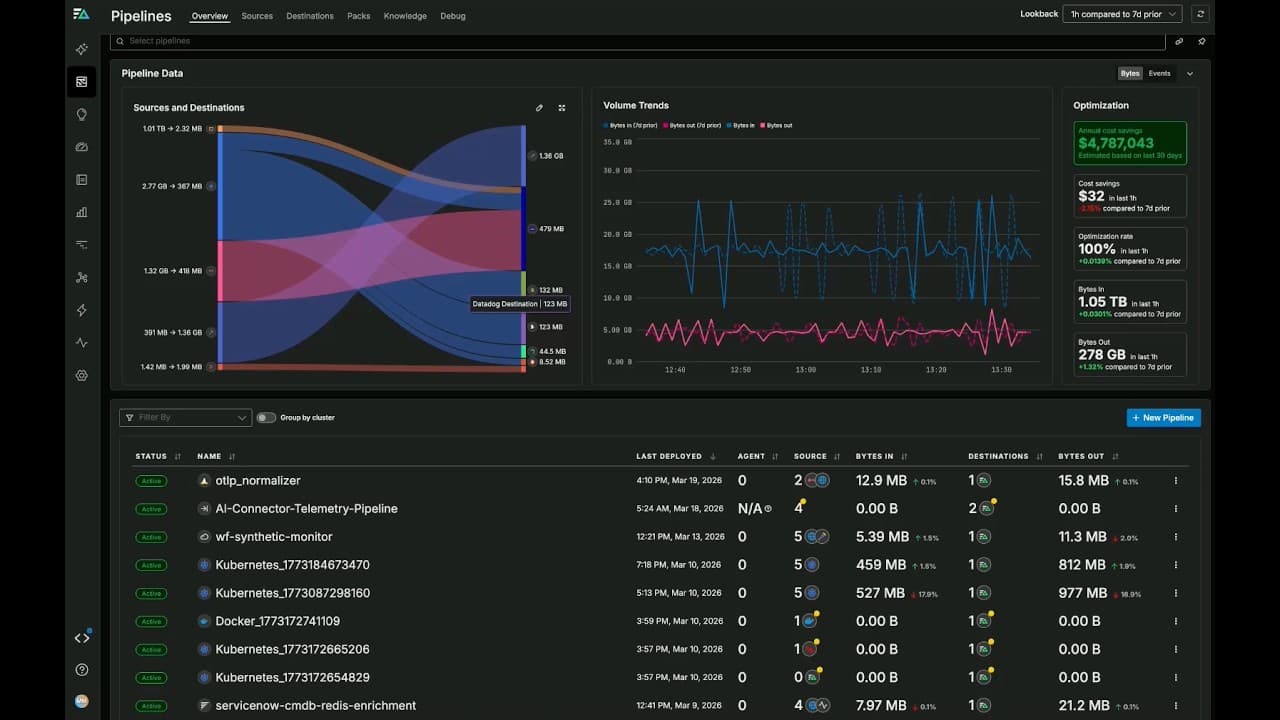

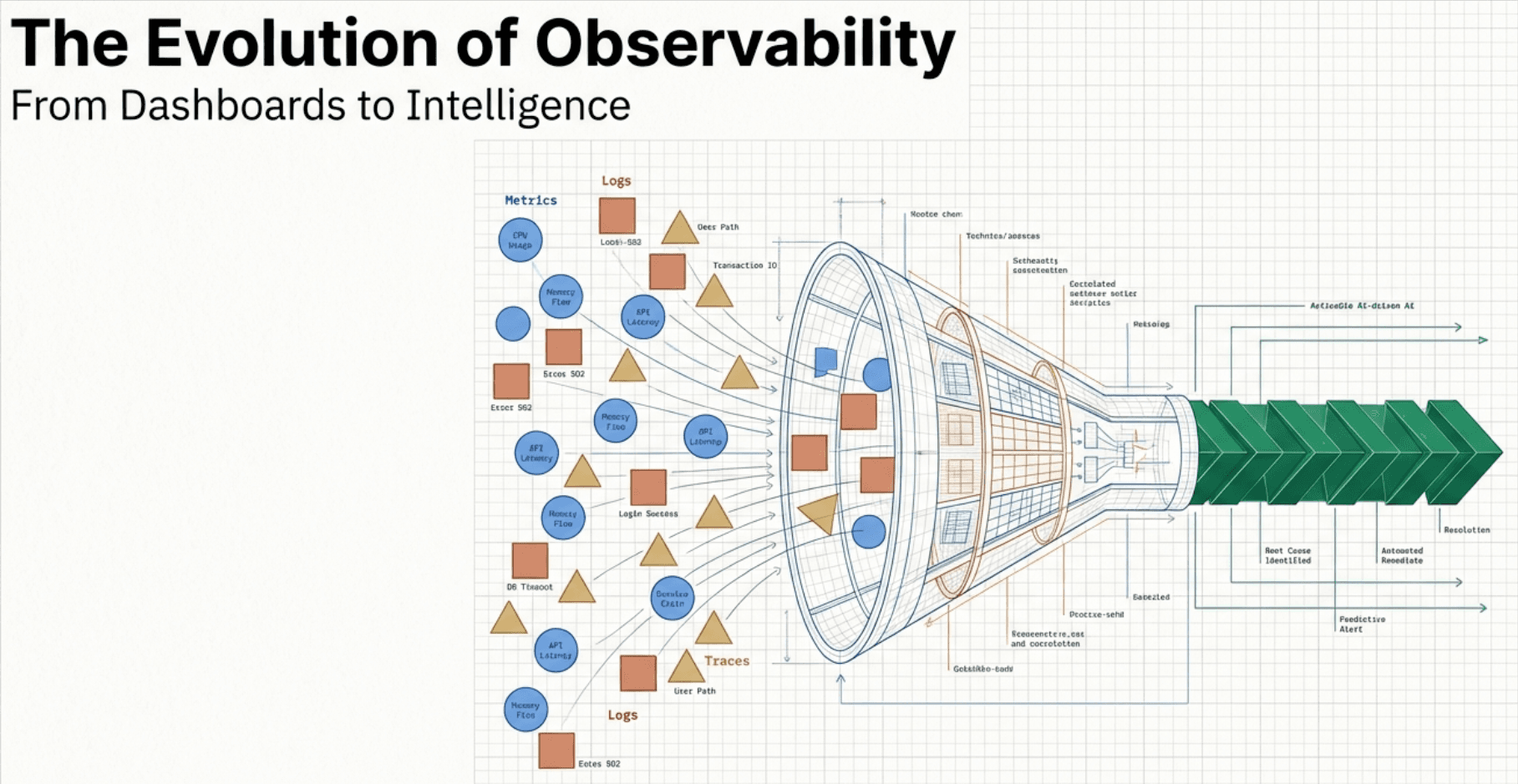

Is your observability platform powerful but shockingly expensive? As systems grow more complex, the cost of observing them often spirals out of control. In this talk, Tim George from Integration Plumbers walks through practical strategies to reduce OpenTelemetry implementation complexity and cut data costs without sacrificing visibility.

What You'll Learn

- The Cost Challenge: Why observability costs spiral and how to frame the problem

- Simplification Strategies: Using Auto-Instrumentation and the OpenTelemetry Protocol (OTLP) for efficiency

- The Cardinality Problem: Understanding high vs. low cardinality and its impact on cost

- Sampling 101: Converting excessive data into manageable, meaningful volumes

- Sampling Types: Head Sampling, Rate Limiting (server-side), and Tail-Based Sampling
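To make the difference between head and tail-based sampling concrete, here is a minimal Python sketch. It is an illustration only, not the talk's code and not the OpenTelemetry SDK or Collector implementation: the function names, the span dictionary fields (`start_ms`, `end_ms`, `error`), and the 500 ms threshold are all assumptions chosen for the example.

```python
# Illustrative sketch only: names, fields, and thresholds are hypothetical.

def head_sample(trace_id: int, ratio: float, buckets: int = 10_000) -> bool:
    """Head sampling: decide when the trace starts, using only its ID.

    The decision is deterministic, so every service that sees the same
    trace ID reaches the same keep/drop answer.
    """
    return (trace_id % buckets) < ratio * buckets


def tail_sample(spans: list[dict], slow_ms: float = 500.0) -> bool:
    """Tail-based sampling: decide after the whole trace has been collected.

    This example policy keeps a trace if any span recorded an error or if
    the end-to-end duration reaches the (assumed) slow threshold.
    """
    has_error = any(span.get("error", False) for span in spans)
    duration_ms = max(s["end_ms"] for s in spans) - min(s["start_ms"] for s in spans)
    return has_error or duration_ms >= slow_ms


if __name__ == "__main__":
    # Head sampling at 10% keeps roughly one trace in ten, blind to content.
    kept = sum(head_sample(trace_id, 0.10) for trace_id in range(10_000))
    print(f"head sampling kept {kept} of 10000 traces")

    # Tail sampling keeps the interesting traces regardless of volume.
    fast_ok = [{"start_ms": 0, "end_ms": 40}]
    fast_error = [{"start_ms": 0, "end_ms": 40, "error": True}]
    print(tail_sample(fast_ok), tail_sample(fast_error))
```

The trade-off the sketch shows: head sampling is cheap because it needs no buffering, but it cannot know whether a trace will turn out to be slow or failed. Tail-based sampling can apply such policies, at the cost of holding each trace's spans until it completes.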

Video Chapters

- 0:00 – Introduction: The OTEL Cost Challenge

- 0:55 – The Game Plan: 4 Steps to Optimization

- 1:21 – Strategy 1: Simplify Implementation

- 1:47 – Auto-Instrumentation vs. Manual

- 2:05 – Centralizing Logic in OTEL Collectors

- 2:38 – Standardizing Naming Conventions

- 2:56 – Controlling Cardinality

- 3:36 – Strategy 2: Taming the Data Firehose

- 4:10 – Head Sampling (Client Side)

- 4:29 – Rate Limiting Sampling

- 4:47 – Tail-Based Sampling (Smart Policies)

- 5:17 – Summary: Your Optimization Toolkit
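For a rough sense of why the cardinality chapter matters for cost, the sketch below estimates the worst-case number of distinct time series a metric can produce as the product of the distinct values of each attribute. The attribute names and value counts are made-up assumptions for illustration, not figures from the talk.

```python
from math import prod


def max_series(attribute_values: dict[str, int]) -> int:
    """Upper bound on distinct series: product of distinct values per attribute."""
    return prod(attribute_values.values())


# Hypothetical low-cardinality attributes: a handful of values each.
low = {"http_method": 5, "status_class": 5, "region": 4}

# Adding a single high-cardinality attribute (an assumed 100,000 user IDs).
high = {**low, "user_id": 100_000}

print(max_series(low))   # 100
print(max_series(high))  # 10000000
```

One unbounded attribute multiplies the series count by its number of distinct values, which is why attributes such as user or request IDs are usually better kept out of metric dimensions.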