Introduction: The Test That Can't Fail in Production

Your custom OpenTelemetry collector is working. It polls the Rivian API every five minutes, transforms vehicle state into OTEL metrics, and exports cleanly to your Prometheus backend. You've tested it against a real vehicle. The dashboards look great.

Then you deploy it across a 200-vehicle fleet. Within the first week, a vehicle's battery hits 0% while in transit. Your collector dutifully records vehicle.battery.level: 0, but the alert that was supposed to fire never does because your threshold logic silently swallows the edge case. The operations team doesn't find out until the driver calls in from the side of the road.

You never tested that scenario because you couldn't. Draining a real Rivian battery to zero on demand is expensive, impractical, and potentially dangerous. And your unit tests, which mocked the API response with a hardcoded JSON blob, never exercised the state transitions that lead to a zero-charge condition over time.

This is the gap that simulation testing fills. And for teams building OpenTelemetry collectors against external APIs, it's the difference between confidence and hope.

In this post, we'll walk through how we built a fully self-contained Rivian API simulator in Python, why it catches bugs that mocks and stubs miss, and how to integrate it into your development and CI/CD workflows. We'll continue using our example customer SparkPlug Motors, a fictional auto parts company operating a fleet of 200 Rivian vehicles.

The Testing Pyramid Has a Blind Spot

If you've built integrations against external APIs, you're familiar with the standard testing toolkit: unit tests with mocks, integration tests with stubs, and maybe some contract tests. These tools are valuable, but they share a fundamental limitation when it comes to testing OpenTelemetry collectors for fleet-scale systems.

Stubs return static data. They're fast and simple, but they tell you nothing about how your system behaves when state changes over time. A stub that returns battery_level: 85 will always return 85. It won't simulate the gradual drain from 85 to 5 that your alerting logic needs to handle.

Mocks verify that your code makes the right calls in the right order. Excellent for unit tests, but they still return canned data. A mock won't reveal that your collector silently drops metrics when the API returns a field your code hasn't seen before.

Contract tests validate the shape of API responses. They catch schema mismatches early, but they don't exercise the behavioral patterns that cause production failures: state transitions, timing-dependent bugs, and cascading effects across a fleet of vehicles.

The simulator sits at the highest fidelity level. It maintains vehicle state in a real database, battery levels change based on simulated driving patterns, and GPS positions are constrained by actual geofence polygons. The trade-off is complexity, but the bugs you catch at this level are the ones that would otherwise only surface in production.

| Approach | State Over Time | Fault Injection | Realistic Data | Speed |
|---|---|---|---|---|
| Mocks | No | No | No | Fast |
| Stubs | No | Limited | Limited | Fast |
| Contract Tests | No | No | Schema only | Fast |
| Simulation | Yes | Yes | Yes | Moderate |
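
To make the "State Over Time" column concrete, here is a minimal sketch that runs the same edge-triggered alert check against a stub and against a stateful source. The function names, the 4%-per-poll drain, and the 10% threshold are illustrative assumptions, not part of the simulator.

```python
from typing import Iterator, List


def stub_battery() -> Iterator[float]:
    """Stub: returns the same reading on every poll."""
    while True:
        yield 85.0


def simulated_battery(start: float = 85.0, drain_per_poll: float = 4.0) -> Iterator[float]:
    """Stateful source: each poll reflects drain since the previous one."""
    level = start
    while True:
        yield level
        level = max(0.0, level - drain_per_poll)


def crossings_below(readings: Iterator[float], threshold: float, polls: int) -> List[int]:
    """Return the poll indexes at which the level drops below the threshold."""
    fired: List[int] = []
    previous = None
    for i in range(polls):
        current = next(readings)
        # Edge-triggered: fire only on the transition, not on every low reading.
        if previous is not None and previous >= threshold > current:
            fired.append(i)
        previous = current
    return fired
```

Against the stub the alert can never fire, because there is no transition to detect. Against the stateful source it fires exactly once, at the poll where the level crosses the threshold.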

Inside the Rivian API Simulator

The Integration Plumbers Rivian API Simulator is a utility we developed to expedite the testing and development of our OpenTelemetry collectors and the alerting infrastructure built on top of them. The goal was twofold: verify that our collectors perform as expected, and inject fault states to simulate conditions at scale.

Architecture Overview

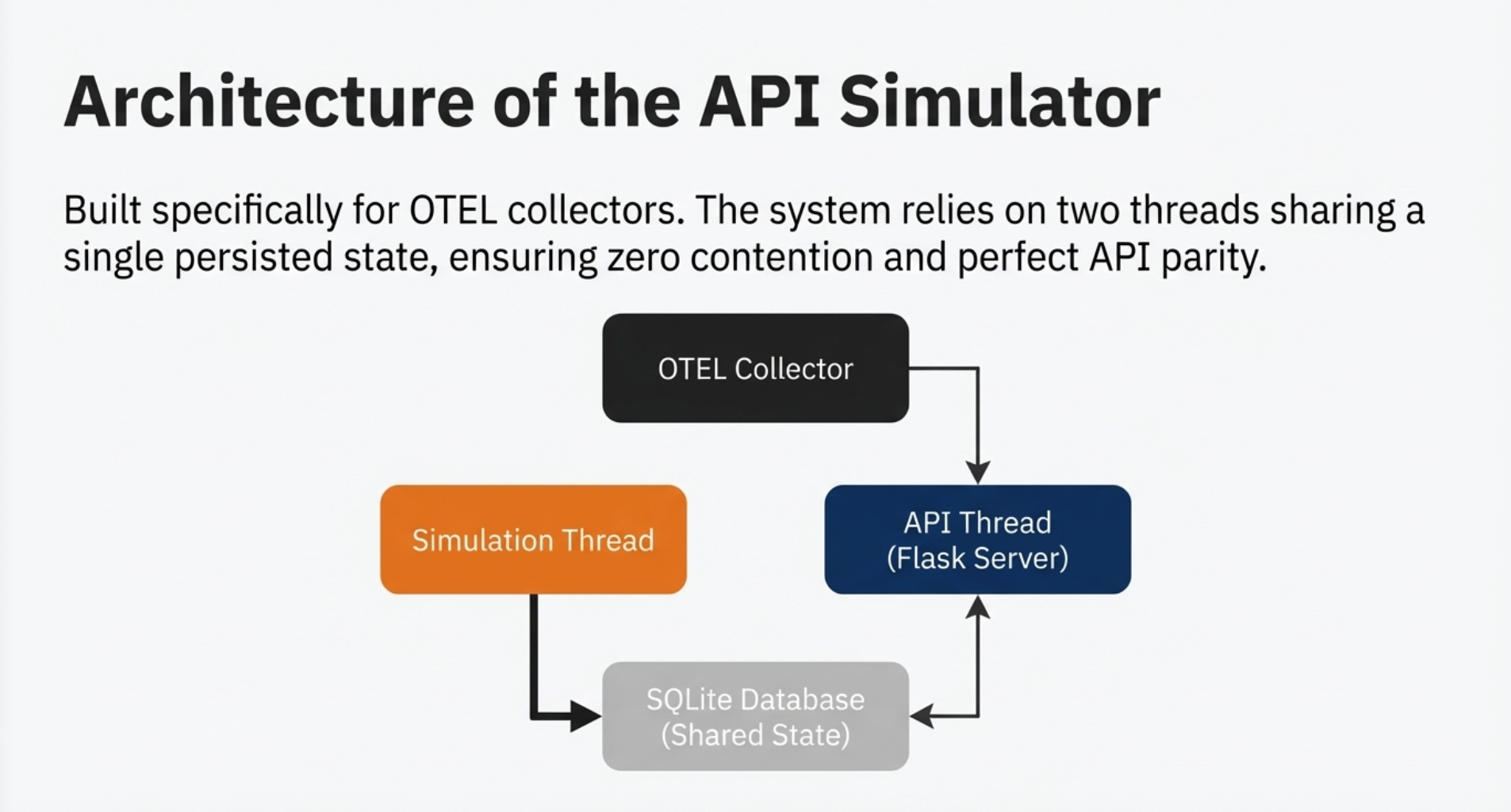

The simulator runs two threads sharing a single RivianSimulator instance:

- Simulation Thread: Advances the fleet state each tick. Vehicles move, batteries drain, charging sessions begin and end, and positions update within geofence boundaries.

- API Thread: Runs a Flask server that mirrors the real Rivian GraphQL API surface. Any client that talks to the Rivian API can point at the simulator without modification.

State is persisted in SQLite, so fleet data survives restarts. A vehicle movements table logs every position and battery state change throughout the simulation, the same historical data that OpenTelemetry collectors use for trend analysis.
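
As a rough illustration of what such a movements table could look like, here is a sketch using Python's built-in sqlite3 module. The table name, column names, and helper functions are assumptions made for this example; the simulator's actual schema may differ.

```python
import sqlite3

# Hypothetical schema: one row per vehicle per tick.
SCHEMA = """
CREATE TABLE IF NOT EXISTS vehicle_movements (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    vehicle_id TEXT NOT NULL,
    sim_time TEXT NOT NULL,
    latitude REAL NOT NULL,
    longitude REAL NOT NULL,
    battery_level REAL NOT NULL
)
"""


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the database and make sure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def log_movement(conn, vehicle_id, sim_time, lat, lon, battery_level) -> None:
    """Append one position and battery reading for a vehicle."""
    conn.execute(
        "INSERT INTO vehicle_movements "
        "(vehicle_id, sim_time, latitude, longitude, battery_level) "
        "VALUES (?, ?, ?, ?, ?)",
        (vehicle_id, sim_time, lat, lon, battery_level),
    )
    conn.commit()


def battery_history(conn, vehicle_id):
    """Return (sim_time, battery_level) rows in simulated-time order."""
    rows = conn.execute(
        "SELECT sim_time, battery_level FROM vehicle_movements "
        "WHERE vehicle_id = ? ORDER BY sim_time",
        (vehicle_id,),
    )
    return rows.fetchall()
```

Passing a file path instead of `:memory:` gives the restart-surviving behavior described above.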

```python
import threading

# Entry point: two threads sharing one simulator instance.
# RivianSimulator, DatabaseHandler, Geofence, run_sim, and run_api
# are defined elsewhere in the simulator.
def main():
    simulator = RivianSimulator(db=DatabaseHandler(), geofence=Geofence())
    stop_event = threading.Event()
    sim_thread = threading.Thread(target=run_sim, args=(simulator, stop_event))
    api_thread = threading.Thread(target=run_api, args=(simulator, stop_event))
    sim_thread.start()
    api_thread.start()
```

The threading model is intentionally simple. The Flask API serves data from memory, and the simulation thread generates data quickly but infrequently, resulting in very low contention between the two.
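
For readers who want to try the pattern in isolation, here is a small runnable sketch of a worker thread sharing state with a reader and shutting down through a `threading.Event`. `TinySimulator` is a hypothetical stand-in, and the lock is an assumption: the post does not say how the real simulator guards shared state.

```python
import threading
import time


class TinySimulator:
    """Hypothetical stand-in for the shared simulator instance."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._tick = 0

    def advance(self) -> None:
        with self._lock:  # writer side: simulation thread
            self._tick += 1

    def snapshot(self) -> int:
        with self._lock:  # reader side: API thread
            return self._tick


def run_sim(sim: TinySimulator, stop_event: threading.Event, interval: float = 0.01) -> None:
    # Event.wait doubles as an interruptible sleep between ticks.
    while not stop_event.wait(interval):
        sim.advance()


def run_briefly(duration: float = 0.1) -> int:
    """Run the simulation thread for a short time and return the tick count."""
    sim = TinySimulator()
    stop_event = threading.Event()
    worker = threading.Thread(target=run_sim, args=(sim, stop_event), daemon=True)
    worker.start()
    time.sleep(duration)
    stop_event.set()  # signal shutdown
    worker.join()     # wait for the loop to exit cleanly
    return sim.snapshot()
```

Because the lock is held only for a counter update or a read, contention stays low even with frequent API requests.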

How the Simulation Loop Works

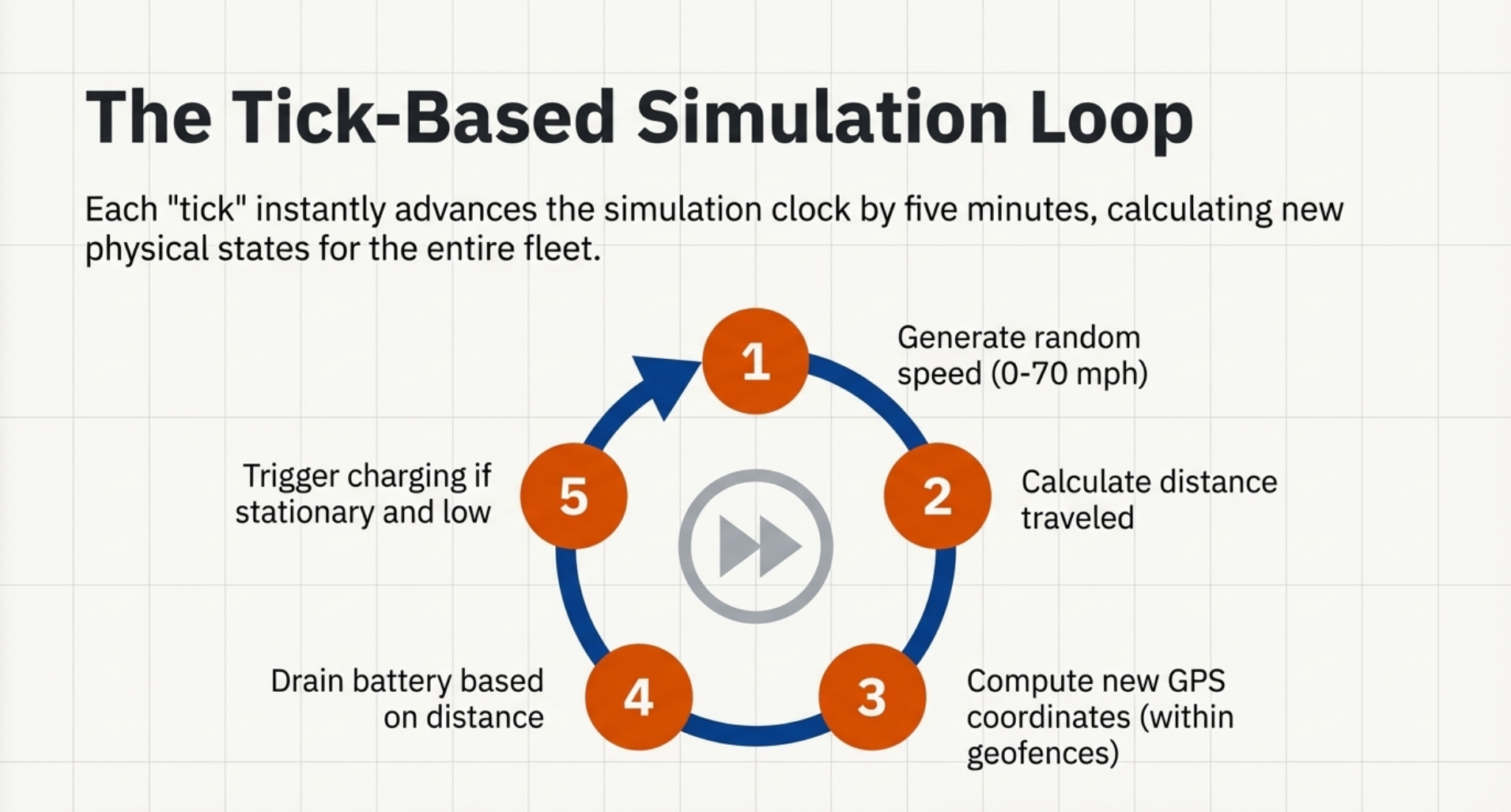

Each tick advances the simulation clock by five simulated minutes. For every vehicle in the fleet:

- A random speed between 0 and 70 mph is generated

- Distance traveled is calculated from the speed and time interval

- A new GPS position is computed within geofence boundaries

- Battery consumption is derived from distance traveled, based on Rivian performance characteristics

- If the vehicle is stationary and below a charge threshold, a charging session begins

```python
# Simplified tick loop
for vehicle in self.vehicles:
    speed = random.uniform(0, 70)             # mph
    distance = speed * (TICK_SECONDS / 3600)  # miles covered this tick
    new_position = self.geofence.random_position_within(
        vehicle.lat, vehicle.lon, distance
    )
    vehicle.apply_travel(distance, new_position)
    vehicle.apply_charging()
    vehicle.save_movement(self.db)
```

This loop runs extremely fast. Each iteration produces five minutes of simulated activity, so generating hours of fleet data takes seconds. That speed is critical for CI/CD integration: you can't afford a test suite that takes 30 minutes to produce enough data.
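
The arithmetic behind one tick can be sketched as follows. Every constant except the five-minute tick is a hypothetical placeholder (including the 300-mile range, which is not a Rivian figure), and the linear drain and charge model is a deliberate simplification.

```python
from typing import Tuple

TICK_SECONDS = 300            # five simulated minutes per tick
ASSUMED_RANGE_MILES = 300.0   # hypothetical full-charge range
CHARGE_THRESHOLD = 20.0       # hypothetical: start charging below this percent
CHARGE_TARGET = 80.0          # hypothetical: stop charging at this percent
CHARGE_PER_TICK = 2.0         # hypothetical: percent gained per tick


def distance_miles(speed_mph: float, tick_seconds: int = TICK_SECONDS) -> float:
    """Miles covered in one tick at a constant speed."""
    return speed_mph * tick_seconds / 3600


def drain_percent(distance: float, range_miles: float = ASSUMED_RANGE_MILES) -> float:
    """Battery percentage consumed by driving the given distance."""
    return distance / range_miles * 100.0


def next_battery_level(level: float, speed_mph: float, charging: bool) -> Tuple[float, bool]:
    """Return (new_level, charging) after one tick."""
    if speed_mph > 0:
        # Moving: drain the battery and end any charging session.
        drained = level - drain_percent(distance_miles(speed_mph))
        return max(0.0, drained), False
    # Stationary: start below the threshold, continue until the target.
    if level < CHARGE_THRESHOLD or (charging and level < CHARGE_TARGET):
        return min(CHARGE_TARGET, level + CHARGE_PER_TICK), True
    return level, False
```

Under these assumptions, a vehicle at 60 mph covers 5 miles per tick and loses about 1.67% of its charge.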

Matching the Real API Surface

The simulator mirrors every GraphQL operation known to the Rivian CLI tool. Python's match/case syntax (available since Python 3.10) provides clean routing:

```python
match operation_name:
    case "getUserInfo":
        return self.handle_user_info()
    case "GetVehicle":
        return self.handle_get_vehicle(variables)
    case "getVehicleState":
        return self.handle_vehicle_state(variables)
    case "getLiveSessionHistory":
        return self.handle_charging_sessions(variables)
    # ... full coverage of known routes
```

The vehicle state response includes over 60 fields, everything from GPS coordinates and battery level to door lock status and tire pressure. We focused simulation data generation on the fields that matter most for fleet management (battery, charging, GNSS) while using the Faker library to generate semantically valid data for the rest.

This ensures that our collectors can parse the full response shape without errors, even for fields we're not actively monitoring. When the real Rivian API adds new fields through an over-the-air update, the simulator can be extended without restructuring anything.
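
On the collector side, tolerating the full response shape might look like the sketch below. The response field names (`batteryLevel`, `distanceToEmpty`, `speed`) are assumptions for illustration; only the metric names come from this post.

```python
from typing import Any, Dict, Tuple

# Hypothetical mapping from response fields to metric names.
KNOWN_METRICS = {
    "batteryLevel": "vehicle.battery.level",
    "distanceToEmpty": "vehicle.range.remaining",
    "speed": "vehicle.speed",
}


def to_metrics(state: Dict[str, Any]) -> Tuple[Dict[str, float], Dict[str, Any]]:
    """Split a vehicle-state payload into numeric metrics and leftover attributes."""
    metrics: Dict[str, float] = {}
    attributes: Dict[str, Any] = {}
    for field, value in state.items():
        metric_name = KNOWN_METRICS.get(field)
        is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
        if metric_name is not None and is_number:
            metrics[metric_name] = float(value)
        else:
            # Unknown or non-numeric fields are kept rather than dropped or raised on.
            attributes[field] = value
    return metrics, attributes
```

A reading of `0` passes through as a real metric value, and a field the code has never seen ends up in the attributes instead of causing an error.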

Why Simulation Testing Matters for OTEL Collectors

Simulation testing grants you the ability to control the state of external dependencies that you don't actually control. For OpenTelemetry collectors that poll third-party APIs, this changes everything about how your team develops and how confidently you deploy.

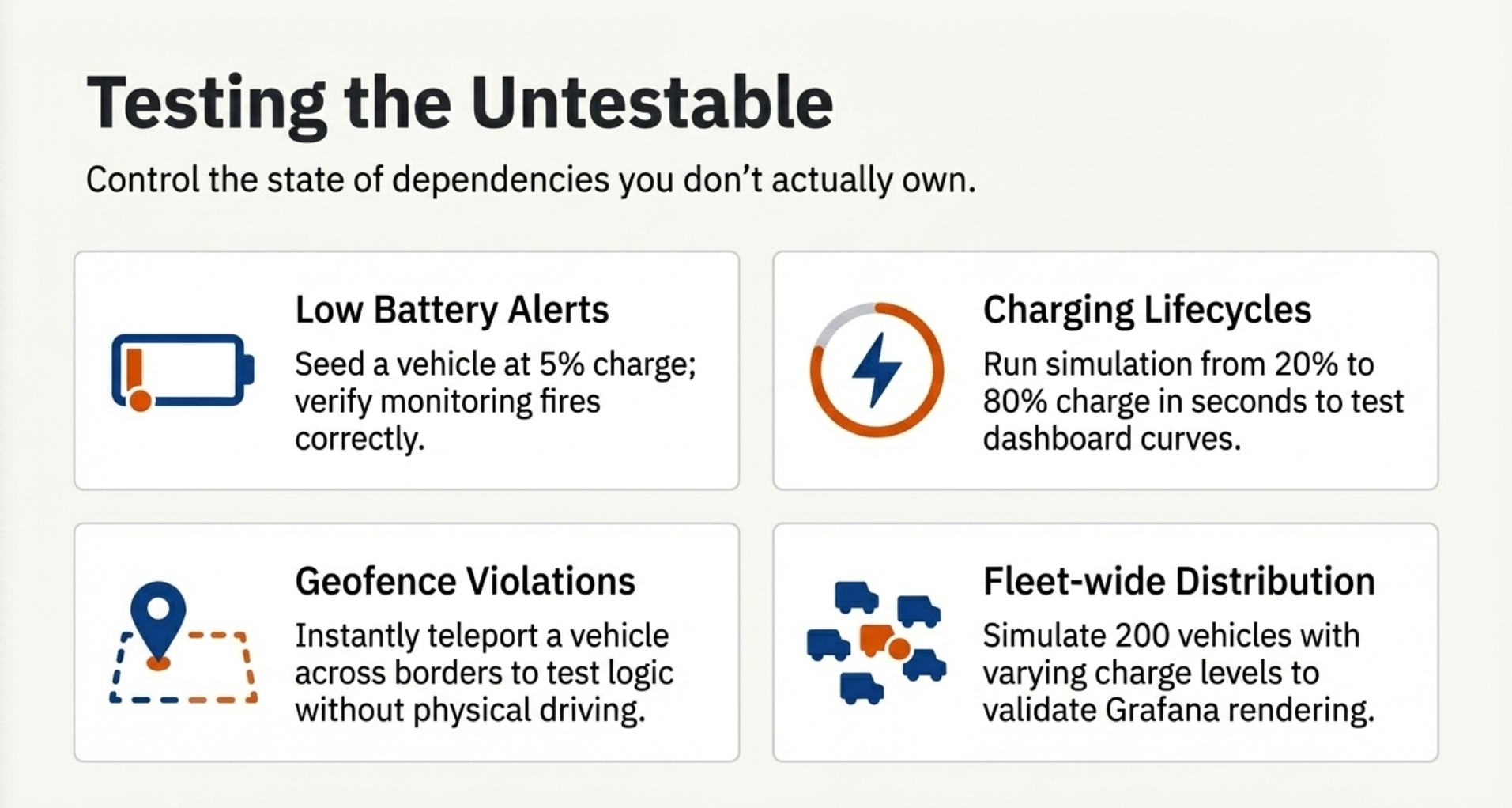

You Can Test the Untestable

Consider these scenarios that SparkPlug Motors needs to validate before production deployment:

Low battery alert. Seed a vehicle at 5% charge and verify that your monitoring system fires the correct notification. With the real API, you'd need to drive a truck until the battery nearly dies. With the simulator, it's a database update.

Charging session lifecycle. Start a vehicle at 20% charge, run the simulator forward until it hits 80%, and verify that your dashboards and OTEL metrics update correctly through the entire charging curve. In the real world, this takes hours. In simulation, it takes seconds.

Geofence violation. Set a vehicle's position outside the continental US boundary and confirm that your geofence alert fires. Against a real vehicle, this requires physically driving across a border.

Fleet-wide battery distribution. Simulate 200 vehicles with varying charge levels to validate that your Grafana dashboards correctly render fleet-wide battery state-of-charge distributions. No mock can produce this data realistically.

Each of these would be extremely difficult or impossible to test against the real API. With the simulator, they're trivial to set up and repeat.
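
As a sketch of the low battery scenario, seeding a vehicle can be a single UPDATE followed by the check under test. The `vehicles` table, its columns, the vehicle IDs, and the 10% threshold are assumptions for this example, not the simulator's real schema.

```python
import sqlite3

LOW_BATTERY_THRESHOLD = 10.0  # percent; illustrative value


def make_fleet(conn: sqlite3.Connection, size: int, level: float = 80.0) -> None:
    """Create a hypothetical vehicles table with every vehicle at the same charge."""
    conn.execute(
        "CREATE TABLE vehicles (vehicle_id TEXT PRIMARY KEY, battery_level REAL NOT NULL)"
    )
    conn.executemany(
        "INSERT INTO vehicles VALUES (?, ?)",
        [(f"truck-{i:03d}", level) for i in range(size)],
    )


def seed_battery(conn: sqlite3.Connection, vehicle_id: str, level: float) -> None:
    """Force one vehicle into the state the test needs."""
    cur = conn.execute(
        "UPDATE vehicles SET battery_level = ? WHERE vehicle_id = ?",
        (level, vehicle_id),
    )
    if cur.rowcount != 1:
        raise KeyError(vehicle_id)


def low_battery_vehicles(conn: sqlite3.Connection, threshold: float = LOW_BATTERY_THRESHOLD):
    """Return the IDs of vehicles at or below the threshold."""
    rows = conn.execute(
        "SELECT vehicle_id FROM vehicles WHERE battery_level <= ? ORDER BY vehicle_id",
        (threshold,),
    )
    return [row[0] for row in rows]
```

Seeding `0.0` instead of `5.0` exercises the zero-charge edge case from the introduction with the same two calls.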

You're Free From External Constraints

The real Rivian API imposes practical limitations that slow development:

- Rate limits. Hit the API too aggressively and your API key gets locked. Restoring it requires a call to Rivian tech support. The simulator has no rate limits.

- Two-key restriction. Rivian allows only two API keys per vehicle. Drivers consume one with their phone app, leaving one for your collector. The simulator generates keys for all 200 vehicles instantly.

- Network dependency. Your tests don't fail because Rivian had an outage or your internet dropped. The simulator runs on localhost.

- Cost. Real API calls against real vehicles consume real resources. The simulator costs nothing to run.

You Can Control Time

This is one of the most powerful advantages. The tick-based simulation model lets you compress months of fleet activity into minutes. Need to validate trend analysis dashboards over 90 days of data? Run about 26,000 ticks (90 days × 288 five-minute ticks per day = 25,920). Need to test how your retention policies handle data aging? Generate it on demand.

You can also script event sequences (a vehicle drives for two hours, parks, charges to 80%, then drives again) and replay them deterministically. This is essential for regression testing: if a collector update breaks a specific scenario, you can reproduce it exactly.
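
One way to get deterministic replay is to drive the scripted sequence from a seeded random generator. The sketch below assumes that approach; the phase names, drain range, and charge rate are hypothetical, and the post does not describe how the simulator itself handles seeding.

```python
import random
from typing import List, Tuple

# A scripted sequence of (phase, number of five-minute ticks):
# drive two hours, park 30 minutes, charge one hour, drive one hour.
SCENARIO: List[Tuple[str, int]] = [("drive", 24), ("park", 6), ("charge", 12), ("drive", 12)]


def replay(seed: int, start_level: float = 90.0) -> List[float]:
    """Run the scripted scenario and return the battery level after every tick."""
    rng = random.Random(seed)  # private generator: same seed, same trace
    level = start_level
    trace: List[float] = []
    for phase, ticks in SCENARIO:
        for _ in range(ticks):
            if phase == "drive":
                # Hypothetical drain of 0.5 to 2.0 percent per tick.
                level = max(0.0, level - rng.uniform(0.5, 2.0))
            elif phase == "charge" and level < 80.0:
                # Hypothetical charge rate, capped at 80 percent.
                level = min(80.0, level + 2.0)
            trace.append(round(level, 3))
    return trace
```

Storing the seed alongside a failing test run is then enough to reproduce the exact battery trace later.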

Integrating the Simulator into CI/CD

The simulator was designed to be a CI/CD-native tool from the start. Because it's self-contained with zero external dependencies, integrating it into your pipeline is straightforward.

The Basic Pattern

```yaml
# Example CI/CD pipeline step
test-otel-collector:
  steps:
    - name: Start simulator
      run: |
        cd ip-rivsim
        python main.py &
        sleep 5  # Wait for Flask to initialize
    - name: Run integration tests
      run: |
        pytest tests/integration/ \
          --rivian-endpoint=http://localhost:5050 \
          --sim-ticks=5000
    - name: Verify OTEL metrics
      run: |
        # Check that expected metrics appear in test backend
        python verify_metrics.py \
          --expected=vehicle.battery.level \
          --expected=vehicle.range.remaining \
          --expected=vehicle.speed
```

You start the simulator as a background service, run your integration tests against localhost:5050, and tear it down when you're done. Because there's no network dependency, your pipeline is deterministic.
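
A fixed `sleep 5` can be too short on a slow runner and wasteful on a fast one. One alternative is to poll the port until it accepts connections. The helper below is a hypothetical addition, not part of the simulator, and it checks only that the TCP port is open, not that the API is fully ready.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 30.0, interval: float = 0.2) -> bool:
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Not listening yet (or connection refused): retry after a short pause.
            time.sleep(interval)
    return False
```

In the pipeline above, a call such as `wait_for_port("localhost", 5050)` from a small script could replace the sleep, failing the step if it returns `False`.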

Containerized Deployment

For teams using Docker-based CI/CD, the simulator ships as a container image built on Python 3.14 Slim. The key design decision is a volume mount for the examples directory, which contains both the SQLite database and the geofence points file. This means simulation state persists across container restarts, and you can reset the database by simply replacing the file.

```yaml
# docker-compose.yml
services:
  rivsim:
    build: .
    ports:
      - "5050:5050"
    volumes:
      - ./examples:/app/examples
    restart: unless-stopped
```

No Python version conflicts, no dependency issues, no environment drift between developers.

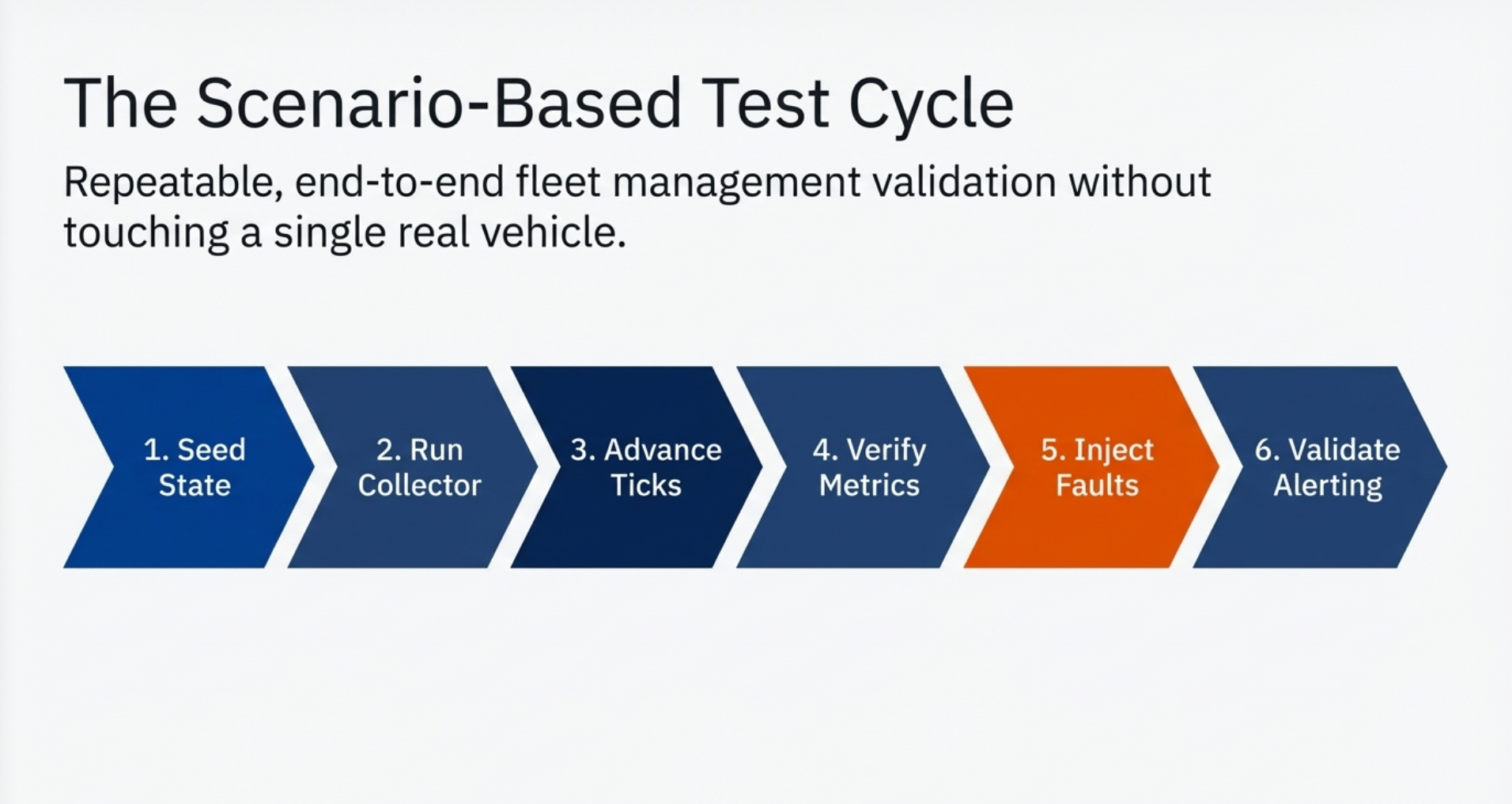

Scenario-Based Test Suites

The most valuable tests are the ones that exercise specific fleet management scenarios end to end:

- Start the simulator with a known seed state

- Run the OTEL collector pointed at the simulator

- Advance the simulation for a controlled number of ticks

- Verify metrics appear correctly in your test observability backend

- Inject fault conditions: database errors, malformed API responses, network timeouts

- Validate alerting: confirm that critical conditions trigger the right notifications

All of this happens without touching a single real vehicle, and you can repeat the entire cycle as often as you need.
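
The fault-injection step can be sketched as a thin wrapper around a response source that substitutes failures on chosen polls. The class, the response shape, and the collector-side `poll_once` function are all hypothetical; they illustrate the pattern rather than the simulator's actual interface.

```python
import json
from typing import Callable, Dict, Optional


def healthy_response() -> str:
    """Hypothetical well-formed API response body."""
    return json.dumps({"data": {"vehicleState": {"batteryLevel": 42.0}}})


class FaultInjector:
    """Wraps a response source and substitutes faults on chosen poll numbers."""

    def __init__(self, source: Callable[[], str], faults: Dict[int, str]) -> None:
        self._source = source
        self._faults = faults  # poll number -> "timeout" or "malformed"
        self._poll = 0

    def fetch(self) -> str:
        self._poll += 1
        fault = self._faults.get(self._poll)
        if fault == "timeout":
            raise TimeoutError("injected timeout")
        if fault == "malformed":
            return '{"data": {"vehicleState": '  # truncated JSON
        return self._source()


def poll_once(fetch: Callable[[], str]) -> Optional[float]:
    """Collector-side poll: return the battery level, or None if the poll failed."""
    try:
        payload = json.loads(fetch())
        return float(payload["data"]["vehicleState"]["batteryLevel"])
    except (TimeoutError, KeyError, TypeError, ValueError):
        # json.JSONDecodeError is a ValueError subclass, so malformed bodies land here.
        return None
```

A scenario test then asserts two things: failed polls are reported rather than crashing the collector, and the first healthy poll afterwards produces a metric again.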

The Onboarding Advantage

This comparison speaks for itself:

| | Without Simulator | With Simulator |
|---|---|---|
| New developer setup | Days to weeks (API access, credentials, test vehicle provisioning) | Clone repo, run python main.py |
| Available test vehicles | 1-2 (shared across the team) | 200 (per developer) |
| Fault testing | Manual, risky, often impossible | Scripted, repeatable, safe |
| CI/CD integration | Flaky (external dependency) | Deterministic (localhost) |
| Cost | Real API calls against real vehicles | Zero |

For SparkPlug Motors, this kind of onboarding speed is a significant competitive advantage. A new engineer is productive against the fleet management stack on day one, not after a week of provisioning and credential management.

Is this approach specific to Rivian, or can it work with other vehicle APIs?

The pattern is completely portable. Tesla's REST API, Ford Connect, and GM's OnStar API each have a different interface, but the simulator architecture (threaded state engine + API mirror + persistence layer) applies to all of them. You'd replace the GraphQL routing with REST endpoints, adjust the vehicle state model, and update the simulation physics. The testing methodology and CI/CD integration remain identical.

How realistic does the simulation need to be?

It depends on what you're testing. For collector validation (parsing, metric mapping, export), basic state simulation is sufficient. For alerting and threshold testing, you need realistic state transitions: batteries that drain over time, not batteries that jump from 100% to 5%. For capacity planning, you need realistic scale. We simplified Rivian's charging curve for our purposes, but the architecture supports more nuanced models when needed.

What about testing against schema changes from OTA updates?

This is one of the strongest arguments for simulation testing. You can add new fields to the simulator's response model before the real API changes, verifying that your collector handles unknown attributes gracefully. OpenTelemetry's attribute-based data model is designed for exactly this kind of extensibility. New fields become new attributes without breaking existing pipelines.

Can the simulator handle load testing at fleet scale?

Yes. With 200 vehicles generating state every tick, you can validate that your OTEL collector pipeline handles the expected throughput. For SparkPlug Motors, that's 200 vehicles x 60+ fields x 12 polls/hour = 144,000+ data points per hour. The simulator generates this data locally without network overhead, so your load tests measure your pipeline's actual processing capacity, not your internet bandwidth.
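
The throughput figure is easy to re-derive for other fleet sizes or polling intervals. A small sketch follows, using 60 fields as the lower bound; the function name is an illustrative assumption.

```python
def data_points_per_hour(vehicles: int, fields: int, poll_interval_minutes: int) -> int:
    """Data points produced per hour when every vehicle is polled on a fixed interval."""
    polls_per_hour = 60 // poll_interval_minutes
    return vehicles * fields * polls_per_hour


hourly = data_points_per_hour(vehicles=200, fields=60, poll_interval_minutes=5)
per_second = hourly / 3600   # average rate across the hour
per_poll_round = 200 * 60    # points arriving together if all vehicles are polled at once
```

The hourly average works out to 40 data points per second. If the collector polls every vehicle in the same round, though, the pipeline sees bursts of 12,000 points, and the burst is the more useful number to load test against.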

Where can I find the code?

The reference implementation is available at github.com/IntegrationPlumbers/ip-rivian-api-simulator. The repository includes the full simulator source, Docker and Kubernetes deployment manifests, and example configurations.

Ready to Build Fleet-Grade Observability?

If you're building OpenTelemetry collectors for vehicle fleets, IoT devices, or any external API, and you want to test with confidence before production, we can help.

At Integration Plumbers, we specialize in building custom OpenTelemetry collectors and the simulation infrastructure needed to develop them at enterprise scale. From Rivian fleets to industrial systems, our team has the deep integration expertise to get your observability pipeline right the first time.

Schedule a consultation with our team →

Let's discuss your fleet monitoring challenges and build a testing strategy that eliminates production surprises.